Punchy

An AI boxing assistant for amateur boxers to improve their skills

Context

In 2020, I started experimenting with tinyML models when TensorFlow began supporting the Arduino Nano 33 BLE Sense. Running machine learning models directly on embedded devices was exciting because the data never has to leave the device, which protects user privacy. I built a fun prototype: a punch classifier inspired by fitness trackers but designed to work entirely offline, without sharing any personal data. My goal was an exercise simulator that could function independently and offer smart features without compromising privacy.

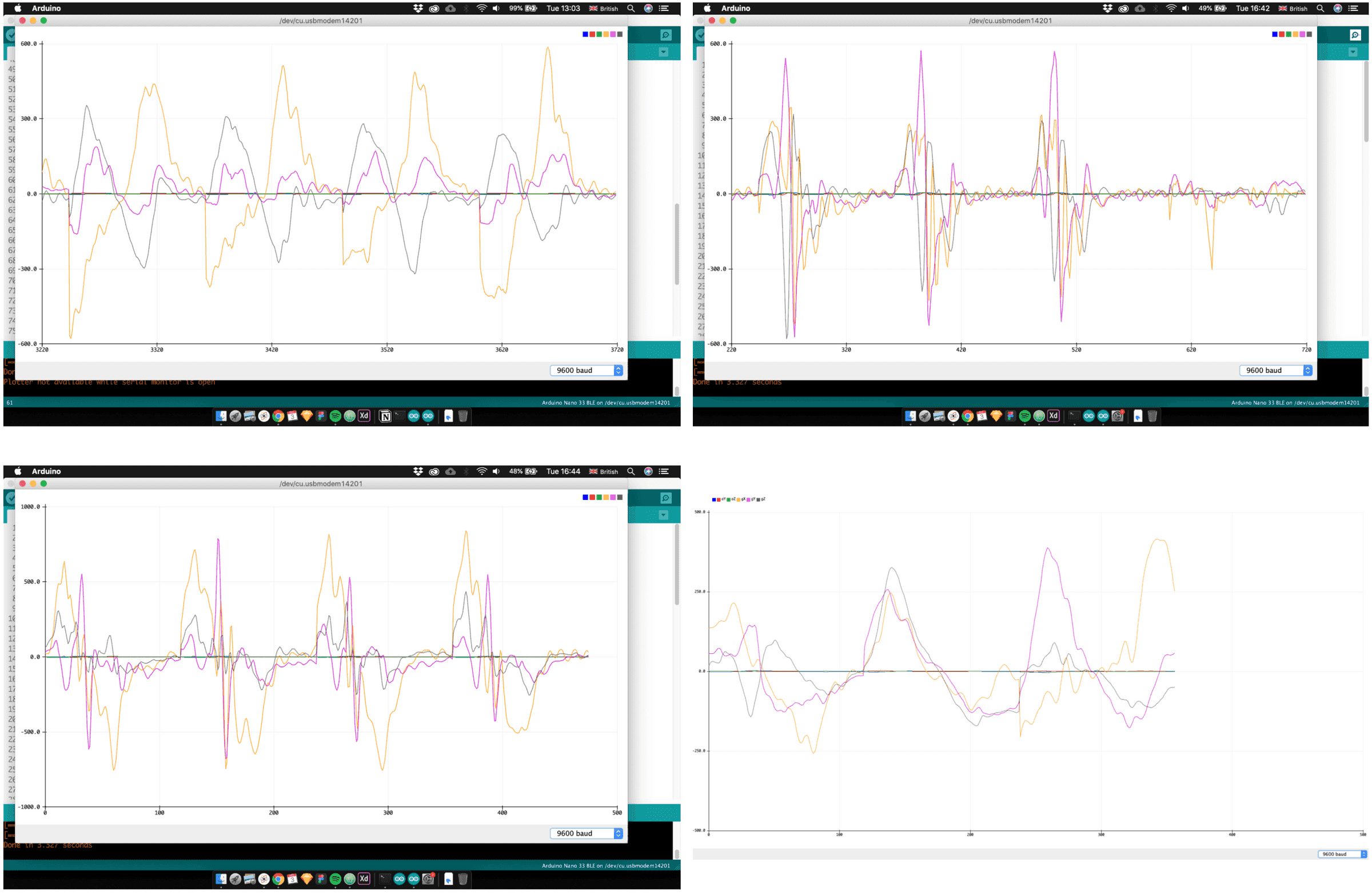

Training data

To train the model, I needed to know how the hand moved. So I collected six parameters in each punch sample: acceleration along the X, Y, and Z axes from the onboard accelerometer, and rotation rates about the same three axes from the gyroscope. The first three images above are graphical representations of what a jab, a hook, and an uppercut look like from a sensor's perspective. The last image shows all of them plotted on the same graph.
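To make the six-parameter sample more concrete, here is a minimal Python sketch of how one recorded punch could be turned into a flat feature vector for a classifier. It is an illustration, not the code used in the project: the sensor ranges (±4 g and ±2000 degrees per second) and the names `normalize_reading` and `punch_to_features` are assumptions made for this example.

```python
from typing import List, Tuple

# Assumed full-scale sensor ranges (hypothetical, not taken from the project).
ACCEL_RANGE_G = 4.0       # accelerometer assumed to report -4 g .. +4 g
GYRO_RANGE_DPS = 2000.0   # gyroscope assumed to report -2000 .. +2000 deg/s

# One reading = (aX, aY, aZ, gX, gY, gZ) captured at a single time step.
Reading = Tuple[float, float, float, float, float, float]


def normalize_reading(reading: Reading) -> List[float]:
    """Scale the six raw values of one reading into the 0..1 range."""
    ax, ay, az, gx, gy, gz = reading
    accel = [(v + ACCEL_RANGE_G) / (2 * ACCEL_RANGE_G) for v in (ax, ay, az)]
    gyro = [(v + GYRO_RANGE_DPS) / (2 * GYRO_RANGE_DPS) for v in (gx, gy, gz)]
    return accel + gyro


def punch_to_features(readings: List[Reading]) -> List[float]:
    """Flatten all readings of one punch into a single feature vector."""
    features: List[float] = []
    for reading in readings:
        features.extend(normalize_reading(reading))
    return features


# Two made-up readings: the hand at rest, then both sensors at their limits.
sample = [
    (0.0, 0.0, 0.0, 0.0, 0.0, 0.0),
    (4.0, -4.0, 0.0, 2000.0, -2000.0, 0.0),
]
features = punch_to_features(sample)
```

Scaling every channel to the same 0..1 range keeps the large gyroscope values from dominating the much smaller accelerometer values during training. A real punch would contain many more readings than the two shown here.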

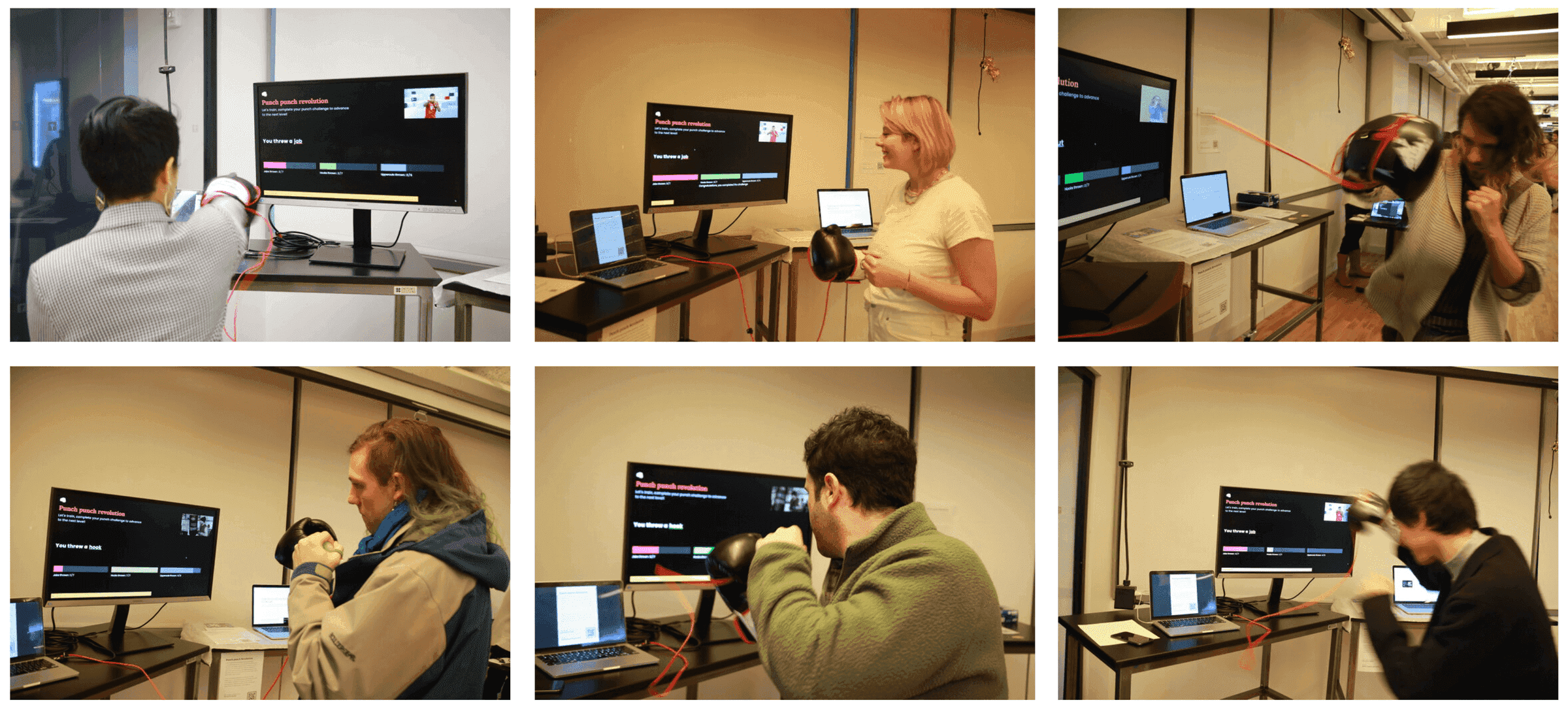

Showcase

I had the chance to showcase the project at the ITP Winter Show, which put it in front of 100+ potential users and let me gather feedback on the interactions. The interactions were not very precise and needed a lot of improvement. I hope that will come as the technology matures, since this was one of the first applications of this tech and hardware. You can see me presenting it on the Coding Train YouTube channel.